Thinking in Balance: Framework for cognitive load distribution in Human-AI collaborative teams


Estudios en Ciencias Sociales y Administrativas de la Universidad de Celaya (August-December 2025), Vol. 14, No. 1, 57-81.

Article received: 11/11/2025. Article accepted: 11/12/2025

Jesús Evert Corral Pedraza

Universidad de Celaya

México[1]

Abstract

Modern organizations increasingly rely on human-AI collaborative teams to handle complex tasks. Yet human cognitive capacity is limited, and team performance declines when collective load exceeds members’ capacities. This study combines Cognitive Load Theory with neuroscience-based thinking styles to propose an AI-mediated framework that monitors and balances team cognitive load. The framework uses behavioral proxies to infer load and reassigns tasks through matching informed by the Brain Dominance Instrument (BDI). A two-tier AI architecture adapts and modulates task allocation based on each member’s cognitive style and momentary effort; the result is a dynamic “cognitive load balancer” that keeps each person’s effort in a sustainable range by routing tasks to the best-suited thinkers. Beyond performance, the paper considers ethical and organizational implications. A complete Python simulation demonstrates feasibility and provides a foundation for further testing and interdisciplinary research.

Keywords: neuroscience, thinking styles, cognitive load, work teams, AI agents, AI

JEL Classification: M12, M15, M54



1. Introduction

The rise of human-AI teaming, from AI assistants on software teams to robot collaborators in factories, is transforming how work gets done. Current frameworks for human-AI collaboration are evolving to reflect the nuanced interaction between people and intelligent systems. Rather than viewing AI as a tool or substitute, modern models emphasize hybrid teams in which humans contribute creativity, ethics, and context, while AI provides scale, pattern recognition, and automation. Table 1 shows the main features of these models, which primarily focus on the balance between technological autonomy and human control.

Table 1

Current frameworks for Human-AI collaboration

| Framework | Key Features |
| --- | --- |
| Collaborative intelligence | Humans and AI systems form teams, each leveraging its strengths to complement the other rather than compete. The approach focuses on achieving outcomes neither could accomplish alone by combining human intuition and judgment with AI’s efficiency (Daugherty & James, 2019). |
| Human-Centered AI | A two-dimensional framework for AI design that seeks high human control and high automation simultaneously. Emphasizes safety, transparency, and human values in AI to create technology that augments rather than replaces humans (Shneiderman, 2022). |
| Levels of autonomy | A taxonomy of human-automation collaboration defined as 10 levels, from full human control to full machine autonomy: at Level 1 the computer offers no assistance (the human does everything), while at Level 10 the system makes decisions and acts entirely autonomously, without human involvement or even notification (Lui, 2024). |
| Augmented intelligence | IBM’s approach, in which AI systems are designed to enhance human intelligence and decision-making, not replace it. Emphasizes human-AI partnership: AI provides insights, recommendations, and automation of routine data analysis, while humans provide judgment, domain knowledge, and final decisions (IBM, 2024). |

Source. Own work for this study.

Although AI can offload routine tasks or provide decision support, human partners still face limits of working memory and attention (Sweller, 1988). When team task demands climb, cognitive overload can degrade performance (analogous to system overload leading to failure). Yet most cognitive load research has treated load as an individual phenomenon. In practice, teams face a team cognitive load: the sum of all members’ mental effort, which, if unchecked, impairs collective effectiveness. Moreover, team members differ in thinking style[1] (their preferred ways of processing information), so the same task may be cognitively light for one individual yet burdensome for another. This individual variability is often overlooked in task assignment.

A gap can be identified: while Cognitive Load Theory (CLT) tells us to avoid overloading an individual’s working memory, it rarely addresses how to distribute work across a cognitively diverse team or how to adapt interfaces in real time. Similarly, Herrmann’s thinking-style model (Herrmann, 2015) describes how people prefer to think, but not how to use that knowledge to balance load dynamically. Our objective is to integrate these perspectives into a unified framework. This study proposes an AI-driven load management model that assigns tasks in a BDI[2]-informed way (aligning tasks with people’s preferred cognitive quadrants) and continuously rebalances assignments as workload and complexity change. The AI also adjusts its communication style and frequency to reduce extraneous load. Concretely, the paper outlines an AI architecture with a high-level Planner (Coordinator) and a low-level Executor (Manager) that together monitor team metrics and intervene to redistribute cognitive work.

The contributions of this study are twofold. First, it offers a framework (grounded in CLT and cognitive neuroscience) for understanding load in mixed human-AI teams. This research combines insights on intrinsic/extraneous/germane load (Brown, 2024), thinking styles (Herrmann, 2015) and team diversity benefits (Hong & Page, 2004; Aggarwal et al., 2019) to characterize a new problem space. Second, an adaptive AI architecture for load balancing was designed: it monitors lightweight interaction cues, learns teammates’ cognitive profiles, and reallocates tasks or modulates help dynamically. This positions AI not just as a tool, but as a cognitive facilitator that augments team cognition. This work advances human-AI teaming theory by explicitly connecting individual neurocognitive factors to real-time coordination strategies and has practical implications for designing collaborative platforms that keep teams within optimal cognitive bounds. In addition, complete code for a simulation was developed to demonstrate the framework’s feasibility, thus laying the groundwork for future empirical testing and implementation.

2. Background and theoretical foundations

2.1. Cognitive Load Theory

CLT posits that working memory has limited capacity, so task design must account for mental effort. Sweller (1988) showed that problem-solving tasks consume processing capacity, leaving less room for schema learning. CLT breaks load into intrinsic and extraneous components (Clark & Mayer, 2016): intrinsic load is the inherent complexity of the task, while extraneous load comes from how information is presented. There is also germane load, the effort used to form new schemas and strategies. Table 2 summarizes these components with examples.

Table 2

Cognitive Load Theory components

| Type | Description | Example |
| --- | --- | --- |
| Intrinsic | Mental effort inherent to the task complexity | Solving calculus problems |
| Extraneous | Inefficient load from poor presentation | Cluttered slides, distracting animations |
| Germane | Effort devoted to schema formation (learning) | Connecting new ideas to prior knowledge |

Goal: minimize extraneous load, manage intrinsic load, and maximize germane load.

Source. Own work, adapted from Sweller, 1988.

In collaborative work, high-intrinsic-load tasks can overwhelm a person’s working memory, while poor processes or communication can add extraneous strain. For example, managing many concurrent discussion threads (coordination overhead) adds extraneous load. As Clark & Mayer (2016) note, if the total load (intrinsic + extraneous) exceeds an individual’s capacity, performance drops sharply. Importantly, load is subjective and varies by person. This study uses CLT to treat team cognition as a resource with finite capacity, where overload leads to errors or burnout. Our aim is to let AI manage the inflow of tasks so that each person’s load stays within sustainable bounds.
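The capacity rule above, together with the notion of team cognitive load as the sum of members’ effort, can be made concrete in a few lines. The following minimal Python sketch assumes that load components are expressed on a common, arbitrary scale and that each member’s capacity is known; the class `MemberLoad`, the helper `team_load`, and all numbers are hypothetical illustrations, not part of the study’s simulation.

```python
from dataclasses import dataclass


@dataclass
class MemberLoad:
    """Hypothetical load snapshot for one member, on an arbitrary common scale."""
    name: str
    intrinsic: float   # complexity inherent to the assigned work
    extraneous: float  # overhead from presentation, coordination, interruptions
    capacity: float    # assumed working-memory budget for this member

    @property
    def total(self) -> float:
        # CLT reading used in the text: total load = intrinsic + extraneous
        return self.intrinsic + self.extraneous

    def overloaded(self) -> bool:
        return self.total > self.capacity


def team_load(members: list[MemberLoad]) -> float:
    """Team cognitive load as the sum of all members' total load."""
    return sum(m.total for m in members)


team = [
    MemberLoad("ana", intrinsic=0.5, extraneous=0.2, capacity=1.0),
    MemberLoad("luis", intrinsic=0.7, extraneous=0.4, capacity=1.0),
]
print(round(team_load(team), 2))                 # 1.8
print([m.name for m in team if m.overloaded()])  # ['luis']
```

The team total alone would not reveal that one member is over budget, which is why the framework tracks load per person as well as collectively.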

2.2. Brain Dominance Instrument model

The Whole Brain Model (Herrmann, 1989) explains how people think, learn, communicate, and make decisions based on different cerebral preferences. The model proposes that the brain can be conceptualized in four quadrants, each associated with a dominant thinking style. The quadrants are commonly labeled A (Analytical), B (Sequential), C (Interpersonal), and D (Imaginative). An A-style thinker excels at data, logic, and quantitative analysis, relishing structured evidence-based tasks (e.g. algorithm design). A B-style person prefers orderly, step-by-step work and clear procedures (e.g. following a detailed manufacturing protocol). A C-style (people-oriented) person is attuned to narrative, emotion, and group dynamics, thriving on brainstorming and social collaboration. A D-style person likes big-picture, conceptual or creative work, relishing open-ended design challenges or strategic planning.

In connection with this, Herrmann developed the Brain Dominance Instrument (BDI), a tool designed to identify an individual’s brain profile and reveal their cognitive preferences. Everyone has a mix of all four styles, but often one or two dominant modes (Corral, 2020).
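For allocation purposes, a profile has to be reduced to its dominant modes. The sketch below assumes a profile is available as one numeric score per quadrant; the 0-100 scale, the cut-off of 67, and the function name `dominant_quadrants` are illustrative assumptions, not the instrument’s official scoring rules.

```python
QUADRANTS = ("A", "B", "C", "D")


def dominant_quadrants(scores: dict[str, float], cutoff: float = 67.0) -> list[str]:
    """Return the quadrants at or above `cutoff`, strongest first.

    If no quadrant clears the cut-off, fall back to the single highest one,
    since every profile is assumed to have at least one preferred mode.
    """
    missing = set(QUADRANTS) - set(scores)
    if missing:
        raise ValueError(f"missing quadrant scores: {sorted(missing)}")
    ranked = sorted(QUADRANTS, key=lambda q: scores[q], reverse=True)
    strong = [q for q in ranked if scores[q] >= cutoff]
    return strong or ranked[:1]


print(dominant_quadrants({"A": 92, "B": 71, "C": 40, "D": 55}))  # ['A', 'B']
print(dominant_quadrants({"A": 50, "B": 45, "C": 60, "D": 30}))  # ['C']
```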

Crucially for load, tasks congruent with one’s dominant quadrant impose lower subjective load: they are “germane” to that person’s strengths. For instance, an A-type thinker is likely to handle heavy data analysis easily, whereas a C-type might find it overwhelming (high intrinsic + extraneous load). Conversely, an A-type asked to role-play customer empathy (a C-domain skill) could struggle. In practice, this means that when team tasks match members’ thinking modes, the effective load is reduced. The proposed model exploits this principle: an AI can assign tasks to those who find them easier. BDI theory thus provides a schema for predicting who should do what that goes beyond expertise: it taps into deep cognitive preferences.

2.3. Cognitive diversity and team performance

Ample research confirms that cognitive diversity (having team members with different perspectives or problem-solving approaches) can improve group performance on complex problems. Hong & Page (2004) famously showed mathematically that a team using diverse heuristics can outperform a team of uniformly “best” participants. In more applied studies, groups with mixed thinking styles often tackle a broader range of tasks successfully, because each member brings distinct strengths. However, diversity has limits. Too little diversity means blind spots (the team lacks skills for some problems), while too much can incur coordination costs. Aggarwal et al. (2019) find evidence of an inverted-U effect: moderate levels of cognitive style diversity maximize collective intelligence, but very high diversity can hurt coordination and collective learning.

Balanced cognitive diversity enhances team performance. A six-year study with the U.S. Forest Service found that teams balanced across the four thinking styles were 66% more efficient and succeeded 70% of the time, compared with 30% for less balanced teams (Carey, 1997).

Building on this, the framework favors a balanced cognitive mix. The AI should avoid extreme clustering (e.g. a team in which everyone shares the same thinking style) or misaligned combinations. Instead, the framework aims to keep a healthy variety of thinking styles working on a project, in line with the “law of requisite variety”. This ensures that the team has the range of cognitive resources it needs without overwhelming communication costs. This principle is built into task allocation: the AI preserves at least some representation from each quadrant and avoids overloading any single style, as long as overall workload is managed.
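The representation check can be sketched with a simple diversity index. The example below uses normalized Shannon entropy over the quadrants of the people currently holding tasks; entropy is a swapped-in measure chosen for illustration (the text does not prescribe one), and the 50% share cap used to detect a crowded style is an assumption. Entropy only rewards evenness, so on its own it does not capture the coordination costs of very high diversity reported by Aggarwal et al. (2019).

```python
import math
from collections import Counter

QUADRANTS = "ABCD"


def style_mix(assignments: dict[str, str]) -> dict[str, float]:
    """Share of active tasks held by members of each dominant quadrant.

    `assignments` maps a task id to the dominant quadrant of its assignee.
    """
    counts = Counter(assignments.values())
    total = sum(counts.values())
    if total == 0:
        return {q: 0.0 for q in QUADRANTS}
    return {q: counts.get(q, 0) / total for q in QUADRANTS}


def normalized_entropy(shares: dict[str, float]) -> float:
    """Shannon entropy of the mix divided by log(4): 0 = a single style, 1 = perfectly even."""
    h = -sum(p * math.log(p) for p in shares.values() if p > 0)
    return h / math.log(len(QUADRANTS))


def mix_warnings(shares: dict[str, float], max_share: float = 0.5):
    """Quadrants with no representation, and quadrants above an assumed share cap."""
    missing = [q for q, p in shares.items() if p == 0]
    crowded = [q for q, p in shares.items() if p > max_share]
    return missing, crowded


shares = style_mix({"t1": "A", "t2": "A", "t3": "A", "t4": "B"})
print(round(normalized_entropy(shares), 2))  # 0.41
print(mix_warnings(shares))                  # (['C', 'D'], ['A'])
```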

2.4. Human-AI teaming and cognitive adaptation

Recent work emphasizes that adaptive AI can enhance human-AI collaboration by tuning to human cognitive factors. Unlike fixed support systems, an adaptive AI monitors the human’s state and modifies its behavior accordingly. For instance, Zhao et al. (2022) argue that AI teammates should “tune their output based on each human partner’s needs and abilities” (learning user preferences, skill level, and current cognitive load) because adapting yields performance gains. They show that when an AI anticipates a human’s readiness (for example, knowing someone is fatigued or uncertain), it can slow down, simplify its language, or break tasks into smaller steps. Conversely, when a user signals confidence and focus, the AI can step back and avoid redundancy. In a related experimental framework, Liu et al. (2024) taught an AI partner different “support levels” and found that teams prefer a more proactive communicator under high difficulty but resent the same chatter on easy problems.

Other research in human-AI teaming aligns with this: AI agents that estimate user goals, trust, or mental models can choose the right modality. For the present framework, this suggests that the AI should infer not only who excels at what (BDI style) but also how burdened each person is in real time (current load). This “cognitive adaptation” layer makes the AI a flexible collaborator rather than a static task allocator. In summary, work on adaptive AI implies that the agents in this framework should model teammates’ mental state and adjust their communication strategy to reduce extraneous load (e.g. simpler language, slower pacing) or increase guidance when intrinsic task load spikes (Zhao et al., 2022).

3. Method

3.1. Research design

This study followed an exploratory research design that combined literature review and theoretical modeling with computational simulation (Python). The approach integrates principles from Sweller’s CLT (1988) and Herrmann’s BDI (1989) to construct a conceptual and algorithmic framework for cognitive load balancing in human-AI teams.

The process consisted of three main stages:

- Theoretical integration, where foundational constructs (load components, thinking styles, and adaptive teaming) were consolidated into a unified conceptual model.

- Framework design and system modeling, in which the AI architecture, indicators, and allocation mechanisms were formalized.

- Simulation-based validation, where the feasibility of the proposed framework was tested computationally using synthetic data to evaluate whether the dynamic allocation rules achieved balanced cognitive load distribution.

3.2. Literature review and model grounding

The theoretical synthesis was conducted through a review of 43 sources in cognitive psychology, neuroscience, and human-AI collaboration (1988-2025). Key frameworks (CLT, BDI, and adaptive AI teaming studies) were cross-analyzed to identify compatible mechanisms and variables (Zhao et al., 2022; Liu et al., 2024).

This step grounded the conceptual structure of the framework, defining the core constructs (load indicators, cognitive profiles, diversity balance) and operational assumptions used later in simulation. The resulting model treats cognitive load as a dynamic, distributed resource, influenced both by task–style congruence and by real-time team interactions.

3.3. Simulation

To demonstrate feasibility and internal consistency, a Python-based simulation was developed (using Jupyter Notebook). The simulation modeled virtual team members with different BDI-style profiles and variable baseline capacities. Synthetic tasks of varying complexity and style alignment were generated and assigned through an algorithm implementing the dynamic task allocation engine proposed in Section 5.
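The simulation code itself is not reproduced in this section. As a hedged illustration of how synthetic inputs of this kind could be generated, the sketch below draws virtual members with a dominant quadrant and a baseline capacity, and tasks with a favored quadrant and a complexity value. The value ranges, the seed, and the names `Member`, `Task`, `make_team`, and `make_tasks` are assumptions, not the study’s actual parameters.

```python
import random
from dataclasses import dataclass

QUADRANTS = "ABCD"


@dataclass
class Member:
    name: str
    style: str        # dominant BDI quadrant
    capacity: float   # baseline capacity (arbitrary units)


@dataclass
class Task:
    task_id: str
    style: str          # quadrant the task is assumed to favor
    complexity: float   # intrinsic demand (arbitrary units)


def make_team(n: int, rng: random.Random) -> list[Member]:
    """Virtual members with a random dominant quadrant and a capacity in [0.8, 1.2]."""
    return [Member(f"m{i}", rng.choice(QUADRANTS), round(rng.uniform(0.8, 1.2), 2))
            for i in range(n)]


def make_tasks(n: int, rng: random.Random) -> list[Task]:
    """Synthetic tasks with a random favored quadrant and a complexity in [0.1, 0.5]."""
    return [Task(f"t{i}", rng.choice(QUADRANTS), round(rng.uniform(0.1, 0.5), 2))
            for i in range(n)]


rng = random.Random(42)  # fixed seed so every run produces the same team and tasks
team, tasks = make_team(4, rng), make_tasks(10, rng)
for member in team:
    print(member)
```

Seeding a dedicated `random.Random` instance keeps runs reproducible, which matters when comparing allocation rules on the same synthetic workload.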

The simulation output included load trajectories, task completion rates, and redistribution events, analyzed to verify whether the AI maintained all participants’ load within sustainable ranges. Results supported the framework’s predictive validity and served as a proof-of-concept for adaptive task distribution.

3.4. Validation

The validation strategy followed standard model-based inference logic (Quinn & Moody, 2020):

- Internal validity was examined by sensitivity testing (varying initial load, task mix, and number of participants).

- Construct validity was verified by mapping simulated measures to theoretical constructs from CLT and BDI literature.

- Predictive validity was assessed by whether the simulated AI maintained balanced load under changing task complexity.

4. Conceptual framework: BDI-based load distribution

4.1. The problem space

The framework tackles the problem of cognitive overload in heterogeneous teams. Without intervention, each person either buckles under the load (making errors) or stalls, waiting for the pace to slow. Team output suffers, as does morale. The key insight is that task-style mismatches increase subjective load (per CLT). The goal is to detect these mismatches and reallocate or transform tasks so that each member stays in the germane-load zone: engaged but not overwhelmed.

Another aspect of the problem is that task complexity often changes over time. A task that was trivial may become complicated as new requirements emerge. A static initial assignment may become suboptimal. Thus, the framework treats task allocation as a dynamic process. The AI continuously monitors load indicators and, when some member’s cognitive budget nears its limit, it can reroute work or enlist a colleague with spare capacity. Throughout, the AI also provides adaptive support (e.g. hints or pacing cues) to smooth peaks of intrinsic difficulty. This combination of proactive rebalancing and reactive support defines the space addressed in this study: how to maintain team cognition within bounds in the face of varying tasks and diverse minds.

4.2. Style-task alignment matrix

To operationalize thinking styles, a style-task alignment matrix is proposed (see Table 3). This matrix categorizes common task types and marks which BDI styles find them most germane. Each cell in the matrix can indicate task friction: how much extra load a given style experiences. Tasks in the “preferred” style cells have low friction (lower subjective load), while mismatched cells have high friction (added extraneous load as the person struggles against their non-preferred mode).

This conceptual matrix guides the AI’s initial allocation: assign tasks to people whose quadrants align with the work. The mapping is approximate and domain-specific, but it provides a starting point. Crucially, if the matrix indicates a high-friction match, the AI can flag it as a possible overload risk. The AI might then split the task, provide extra scaffolding, or reassign it if someone better suited is available. The matrix thus encodes the style-load fit hypothesis: matching cognitive preferences to task types minimizes mental load (Hong & Page, 2004).

Table 3

Thinking Style-Task Alignment Matrix, mapping common task categories to optimal (Strong and Moderate) BDI quadrants

| Task Category | A (Analytical) | B (Sequential) | C (Interpersonal) | D (Imaginative) |

| Quantitative analysis | Strong | Moderate | ||

| Process execution | Moderate | Strong | ||

| Client communication | Strong | Moderate | ||

| Design brainstorming | Moderate | Strong | ||

| Risk assessment | Strong | Moderate | ||

| Conflict resolution | Strong | Moderate | ||

| Project scheduling | Strong | Moderate | ||

| Data visualization | Strong | Moderate | ||

| Innovation workshops | Moderate | Strong |

Source. Own work for this study.
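A matrix of this kind can be encoded as a lookup of fit weights from which friction and red flags are derived. In the hypothetical sketch below, strong = 1.0 and moderate = 0.5 are assumed weights, and the two categories encode only the A/B versus C/D split stated in the prose (numerical analysis to A/B thinkers, creative storytelling to C/D thinkers); which quadrant is “strong” within each pair, the 0.75 red-flag threshold, and all function names are assumptions, not a transcription of Table 3.

```python
# Hypothetical fit weights per (task category, quadrant): 1.0 = strong, 0.5 = moderate,
# 0.0 = no listed alignment. Only the A/B versus C/D split stated in the prose is encoded,
# and which quadrant is "strong" within each pair is an assumption.
FIT = {
    "numerical_analysis":    {"A": 1.0, "B": 0.5, "C": 0.0, "D": 0.0},
    "creative_storytelling": {"A": 0.0, "B": 0.0, "C": 0.5, "D": 1.0},
}


def friction(category: str, style: str) -> float:
    """Task friction for a style: 0 for a strong match, 1 for no listed alignment."""
    return 1.0 - FIT[category][style]


def is_red_flag(category: str, style: str, threshold: float = 0.75) -> bool:
    """Flag a proposed match whose friction meets an assumed threshold."""
    return friction(category, style) >= threshold


def ranked_styles(category: str) -> list[str]:
    """Quadrants ordered from lowest to highest friction for a task category."""
    return sorted(FIT[category], key=lambda q: friction(category, q))


print(ranked_styles("numerical_analysis"))        # ['A', 'B', 'C', 'D']
print(is_red_flag("creative_storytelling", "A"))  # True
```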

4.3. Cognitive load indicators

The AI must estimate each member’s current cognitive load in a non-intrusive way. The approach relies on behavioral and interaction proxies that correlate with mental effort. Key indicators include:

- Response latency and pace: Slower reply times or hesitation (e.g. long pauses in chat, delayed task completions) often signal heavier processing. If an individual’s answer latency rises, the AI infers increased cognitive burden on their working memory. (Broadly, reaction-time research shows that more complex tasks slow responses.)

- Message and task volume: A surge of back-and-forth messages or many simultaneous threads can indicate coordination complexity. Similarly, a growing list of uncompleted tasks or unresolved issues suggests rising load. If the communication history shows rapid, fragmented exchanges or many open items, the AI suspects overload.

- Error rates and revisions: Frequent mistakes, requests for repetition, or multiple edits on tasks can mark strain.

- Task-switching patterns (action entropy): Rapidly switching contexts incurs a “switch cost” on attention. This can be quantified by action entropy over log traces. Recent work in healthcare found that clinicians’ audit logs showed higher entropy (more varied action sequences) when attention switches occurred (Gkintoni et al., 2025). In other words, multithreading tasks increases unpredictability in action logs, which correlates with cognitive burden. Accordingly, the system tracks the entropy of each member’s task timeline: spikes indicate that the member is juggling too many things at once.
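The action-entropy indicator can be sketched with plain Shannon entropy over windows of a member’s log. This assumes logs have already been reduced to a sequence of action-type labels; the window size of 6, the 0.75-bit jump threshold, and the function names are illustrative assumptions, and this is not the method of the cited healthcare study.

```python
import math
from collections import Counter


def action_entropy(actions: list[str]) -> float:
    """Shannon entropy (bits) of the distribution of action types in one log window."""
    if not actions:
        return 0.0
    n = len(actions)
    return sum((c / n) * math.log2(n / c) for c in Counter(actions).values())


def entropy_spikes(log: list[str], window: int = 6, jump: float = 0.75) -> list[int]:
    """Start index of each window whose entropy exceeds the previous window's by > `jump` bits.

    The log is cut into consecutive, non-overlapping windows; a trailing partial
    window is ignored.
    """
    starts = range(0, len(log) - window + 1, window)
    scores = [action_entropy(log[s:s + window]) for s in starts]
    return [k * window for k in range(1, len(scores)) if scores[k] - scores[k - 1] > jump]


focused = ["edit"] * 6
juggling = ["edit", "chat", "email", "edit", "review", "chat"]
print(action_entropy(focused))             # 0.0
print(round(action_entropy(juggling), 2))  # 1.92
print(entropy_spikes(focused + juggling))  # [6]
```

Comparing each window with the previous one targets the “spikes” described above rather than a member’s habitually varied but manageable workflow.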

Along with behavioral proxies, cognitive load can be assessed through physiological metrics such as heart rate, respiration, and pupil diameter (Can et al., 2019). Continuous, unobtrusive biosensors, wearables, and cameras in digital workspaces can complement behavioral and interaction proxies, enabling AI to monitor cognitive states in real time with greater accuracy. AI agents equipped to track physiological hallmarks of load (e.g., increased heart rate or blink rate) could assist teammates more effectively by helping to reduce extraneous load (Kyriakou et al., 2019).

By logging these lightweight cues and feeding them through threshold rules or learning models, the AI maintains a running load estimate for each person. Importantly, this approach avoids intrusive techniques (such as EEG recordings) and works in purely digital collaboration, with unobtrusive physiological metrics as a possible later extension. Because cognitive load fluctuates over time, the AI continually updates these indicators in real time rather than treating them as static. If it observes someone defaulting to simpler choices (a sign of decision fatigue) or taking unusually long on a routine task, it infers rising load and triggers rebalancing (Wilson, 2002).
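One simple instance of such a threshold rule is sketched below. It assumes each proxy has been pre-scaled to 0..1 relative to the member’s own baseline, combines the proxies with hypothetical weights, and smooths the result with an exponential moving average so that a single slow reply does not trigger rebalancing while sustained signals do. The weights, smoothing factor, threshold, and names are all assumptions, not values from the framework.

```python
from dataclasses import dataclass

# Illustrative weights for proxies that are each pre-scaled to 0..1 relative to the
# member's own baseline (1 = far above their usual level). All values are assumptions.
WEIGHTS = {"latency": 0.35, "volume": 0.20, "errors": 0.25, "entropy": 0.20}


def composite(proxies: dict[str, float]) -> float:
    """Weighted sum of clipped proxies; a missing proxy counts as 0."""
    return sum(w * min(max(proxies.get(k, 0.0), 0.0), 1.0) for k, w in WEIGHTS.items())


@dataclass
class LoadEstimator:
    alpha: float = 0.3       # smoothing factor of the exponential moving average
    threshold: float = 0.7   # running estimate above which rebalancing is triggered
    estimate: float = 0.0

    def update(self, proxies: dict[str, float]) -> bool:
        """Fold in one observation and report whether rebalancing should be triggered."""
        self.estimate = self.alpha * composite(proxies) + (1 - self.alpha) * self.estimate
        return self.estimate > self.threshold


strained = {"latency": 1.0, "volume": 1.0, "errors": 1.0, "entropy": 1.0}
est = LoadEstimator()
print([est.update(strained) for _ in range(4)])  # [False, False, False, True]
```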

5. System architecture: AI as cognitive load mediator

The proposed AI system acts as a cognitive facilitator embedded in the team’s workflow. It does not replace human judgment but supports it by steering tasks and communications. The high-level architecture follows a hierarchical planning and feedback model (Liu, 2024), similar to the HRT-ML framework: one module (the Coordinator) handles strategic planning, and another (the Manager) handles task-level execution.

5.1. Role of AI in the team

This paper views the AI teammate not as an autonomous decider, but as a monitor and moderator of team cognition. Its roles include assessing team capacity (via load indicators), assigning or reassigning tasks, and adjusting communication style. The AI maintains a task graph (a directed acyclic graph of project subtasks) and a team model (BDI profiles plus load estimates). When new tasks arrive, the AI consults the model to decide who to involve. During execution, it listens: if a team member signals overload (through the defined proxies), the AI intervenes by providing assistance or redistributing work.
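The two data structures named above can be sketched with the standard library. In this hypothetical example, the task graph maps each subtask to the subtasks it depends on, `graphlib.TopologicalSorter` is used only to reject cyclic graphs, and the team model stores a dominant quadrant and a load estimate per member. The project, member names, and the 0.7 availability cap are assumptions.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class TeamModel:
    profiles: dict[str, str]                               # member -> dominant BDI quadrant
    load: dict[str, float] = field(default_factory=dict)   # member -> current load estimate

    def available(self, max_load: float = 0.7) -> list[str]:
        """Members whose current load estimate is below an assumed cap."""
        return sorted(m for m in self.profiles if self.load.get(m, 0.0) < max_load)


# Task graph for a hypothetical project: each subtask maps to the subtasks it depends on.
task_graph = {
    "collect_data": set(),
    "analyze_data": {"collect_data"},
    "draft_concept": set(),
    "client_review": {"analyze_data", "draft_concept"},
}


def ready_tasks(graph: dict[str, set[str]], completed: set[str]) -> list[str]:
    """Subtasks not yet completed whose dependencies are all completed."""
    TopologicalSorter(graph).prepare()  # raises graphlib.CycleError if the graph is not a DAG
    return sorted(t for t, deps in graph.items() if t not in completed and deps <= completed)


team = TeamModel(profiles={"ana": "A", "ben": "D"}, load={"ana": 0.4, "ben": 0.85})
print(ready_tasks(task_graph, set()))                              # ['collect_data', 'draft_concept']
print(ready_tasks(task_graph, {"collect_data", "draft_concept"}))  # ['analyze_data']
print(team.available())                                            # ['ana']
```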

Critically, the AI keeps its focus on the team’s collective load. Its role resembles that of a human manager who knows each person’s strengths and watches for burnout, intervening to swap tasks before failure.

5.2. Dynamic task allocation engine

The core of the system is a dynamic task-allocation engine. At each decision point, the AI executes a rebalancing cycle along the following lines:

- Assess current load: using the indicators in 4.3 (cognitive load indicators), compute a load score for each member. If a score exceeds a threshold, that person is considered overloaded.

- Match tasks to thinking styles: consult the style-task matrix (4.2) to score each pending task against each member’s BDI profile. Preferentially assign tasks to those whose dominant style aligns. For example, route numerical analysis tasks to A/B thinkers, and creative storytelling tasks to C/D thinkers, assuming no one is overloaded.

- Shift work from overloaded members: if someone’s load is high, identify tasks assigned to them that others could take. Reassign some tasks to less-burdened teammates (or to the AI agent itself if feasible). Ensure that urgent tasks still get done, perhaps by splitting tasks or enlisting temporary help.

- Maintain diversity balance: check the overall style composition of current tasks. If needed, rotate roles so that multiple thinking styles stay engaged. This avoids extreme clustering and leverages different perspectives (Aggarwal et al., 2019).

- Protect focus time: if a member is in the middle of high-intrinsic-load work, delay introducing new tasks or queries to them. The AI can queue non-critical assignments or social interruptions until the member’s workload falls back to moderate. This mimics “deep work” best practices.
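One pass of this cycle can be sketched as follows, under simplifying assumptions that are not part of the original framework: a task’s effective cost is its complexity inflated by a style-friction factor (0 for the member’s own quadrant, 0.5 for the A/B or C/D partner quadrant, 1.0 otherwise); a member counts as overloaded above 90% of an assumed capacity, which doubles as the per-person thinking budget; and members in focus receive no new work. The diversity-balance step is omitted for brevity, and `Member` and `rebalance` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class Member:
    name: str
    style: str          # dominant BDI quadrant
    capacity: float     # assumed thinking budget, analogous to a WIP limit
    tasks: dict[str, tuple[str, float]] = field(default_factory=dict)  # id -> (style, complexity)
    in_focus: bool = False  # True while doing high-intrinsic-load work

    def cost(self, task_style: str, complexity: float) -> float:
        """Effective load of a task: complexity inflated by an assumed style-friction rule."""
        if task_style == self.style:
            friction = 0.0
        elif {task_style, self.style} in ({"A", "B"}, {"C", "D"}):
            friction = 0.5
        else:
            friction = 1.0
        return complexity * (1.0 + friction)

    @property
    def load(self) -> float:
        return sum(self.cost(s, c) for s, c in self.tasks.values())


def rebalance(team: list[Member], pending: dict[str, tuple[str, float]],
              limit: float = 0.9) -> dict[str, tuple[str, float]]:
    """Run one rebalancing pass in place; return the tasks that had to be queued."""
    pool = dict(pending)
    # Assess load and shift work: overloaded members shed their costliest tasks.
    for m in team:
        while m.tasks and m.load > limit * m.capacity:
            costs = {tid: m.cost(s, c) for tid, (s, c) in m.tasks.items()}
            worst = max(costs, key=costs.get)
            pool[worst] = m.tasks.pop(worst)
    # Match tasks to styles, largest first; members in focus receive nothing new.
    queued = {}
    for tid, (style, cx) in sorted(pool.items(), key=lambda kv: -kv[1][1]):
        fits = [m for m in team
                if not m.in_focus and m.load + m.cost(style, cx) <= limit * m.capacity]
        if fits:
            best = min(fits, key=lambda m: (m.cost(style, cx), m.load))
            best.tasks[tid] = (style, cx)
        else:
            queued[tid] = (style, cx)  # defer until someone has spare budget
    return queued


team = [
    Member("ana", "A", 1.0, tasks={"t1": ("A", 0.4), "t2": ("C", 0.4)}),
    Member("ben", "B", 1.0),
    Member("cai", "D", 1.0, tasks={"t5": ("D", 0.5)}, in_focus=True),
    Member("dia", "C", 1.2),
]
queued = rebalance(team, {"t3": ("B", 0.3), "t4": ("D", 0.4)})
print({m.name: sorted(m.tasks) for m in team}, queued)
# {'ana': ['t1'], 'ben': ['t3'], 'cai': ['t5'], 'dia': ['t2', 't4']} {}
```

In this example, ana sheds her mismatched C-style task, cai is left undisturbed because they are in focus, and the D-style task goes to the C-type member instead of cai.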

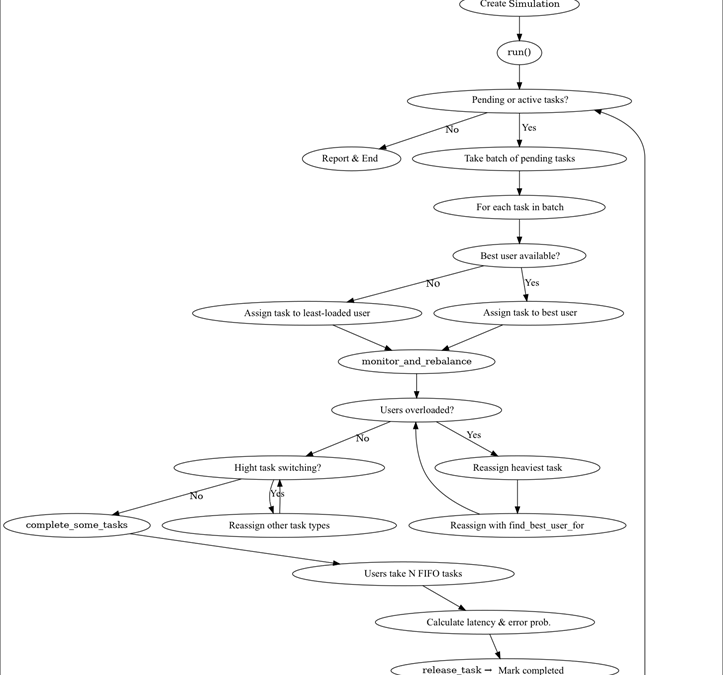

These steps are repeated continuously. In effect, the AI enforces a thinking budget per person (like Kanban’s WIP limit): no individual carries more workload than they can handle. Over time, this adaptive matching and shifting forms a feedback loop that keeps everyone’s effort balanced (Rao et al., 2020). Figure 1 illustrates this dynamic task allocation as part of the framework’s flowchart.

5.3. Communication modulation and feedback loops

In parallel with task allocation, the AI also modulates communication style and frequency to suit each person’s load and style. Drawing on the two-module architecture (Liu et al., 2024), the Coordinator might issue infrequent, high-level guidance, while the Manager provides frequent, step-by-step prompts as needed.

Figure 1

Simplified flowchart of the framework.

Source. Own work for this study.

The AI adjusts how often it chimes in. According to Liu et al. (2024), teams facing harder problems prefer more active, proactive support, but on easy tasks “frequent feedback” is often perceived negatively. Thus, the AI increases its interjections (hints, status checks, clarifications) when task complexity is high and team feedback indicates uncertainty. Conversely, if it detects that a task is routine or that a user is breezing through it, it suppresses unnecessary messages to avoid distraction.
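This adjustment rule can be expressed as a small policy function. The sketch below works under assumed inputs: complexity and uncertainty are 0..1 estimates, progress rate is pace relative to the member’s usual pace (1.0 = typical), and the thresholds and mode names (`proactive`, `on_request`, `quiet`) are hypothetical.

```python
def interjection_policy(complexity: float, uncertainty: float, progress_rate: float) -> str:
    """Choose how proactively the AI should interject (illustrative thresholds).

    complexity, uncertainty: 0..1 estimates for the current task and member.
    progress_rate: observed pace relative to the member's usual pace (1.0 = typical).
    """
    if complexity >= 0.7 and uncertainty >= 0.5:
        return "proactive"   # hints, status checks, clarifications
    if complexity < 0.3 or progress_rate > 1.2:
        return "quiet"       # routine task or member breezing through: suppress chatter
    return "on_request"      # answer when asked, otherwise stay out of the way


print(interjection_policy(0.9, 0.8, 0.6))  # proactive
print(interjection_policy(0.8, 0.2, 1.5))  # quiet
```

Checking the high-difficulty case first means a struggling member on a hard task still gets support even if an earlier pace estimate suggested they were moving quickly.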

Moreover, the AI tunes its tone: it can use more concrete, structured language with B-types and more empathetic, example-driven language with C-types. It can offer visual summaries to D-types, who prefer big-picture views (Sternberg, 1999). These modulations reduce extraneous load by aligning communication with each person’s processing style, and they close a feedback loop: team members’ responses to the AI’s messages inform its model of their style and current load, yielding an ongoing human-AI calibration.
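Style-specific phrasing could be implemented as templates keyed by dominant quadrant. In the hypothetical sketch below, the wording of each template is an assumption: the B, C, and D variants follow the guidance above (structured, empathetic, big-picture), the A variant is a data-oriented guess based on the quadrant description in Section 2.2, and a plain-text big-picture line stands in for a visual summary.

```python
from typing import Optional


def render_update(style: str, done: int, total: int, blocker: Optional[str] = None) -> str:
    """Phrase the same status update for a member's dominant quadrant (assumed templates)."""
    pct = round(100 * done / total) if total else 0
    if style == "A":
        msg = f"Progress: {done}/{total} tasks ({pct}%)."
    elif style == "B":
        msg = f"Step {done} of {total} complete. Next: step {min(done + 1, total)}."
    elif style == "C":
        msg = f"Nice work, the team is {pct}% of the way there."
    else:  # "D", or any unrecognized style
        msg = f"Big picture: about {pct}% of the plan is in place."
    if blocker:
        msg += f" Open issue: {blocker}."
    return msg


print(render_update("A", 3, 4))  # Progress: 3/4 tasks (75%).
print(render_update("B", 3, 4))  # Step 3 of 4 complete. Next: step 4.
```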

6. Theoretical implications and use cases

6.1. Implications for team design

The framework suggests new principles for team composition and design. Traditionally, team formation focuses on skills or knowledge (Corral, 2020); this framework adds thinking styles to the mix. Ideally, teams should be assembled with a diverse but moderate spread of BDI profiles to cover varied task types.

A team’s BDI profile mix also makes it possible to anticipate overload hotspots, such as task types that no member’s dominant style matches. As Zhang et al. (2024) note, an AI aware of these hotspots could proactively recruit additional support or adjust training efforts accordingly. In short, BDI combined with load modeling helps predict where teams might struggle and design around it.

6.2. Implications for human-AI collaboration

Conceptually, the proposed approach recasts AI from a mere tool to a “cognitive equalizer”. It embodies principles of extended and distributed cognition: the team’s collective mind is now partly externalized to the AI agent that manages mental workload (Wang, 2020). Just as a spreadsheet augments memory or a calculator offloads arithmetic, the AI offloads some of the team’s coordination. This aligns with theories that argue cognition can be distributed across people and artifacts (Clark & Chalmers, 1998) – here, tasks are distributed across minds in an optimized pattern.

Practically, this means designing AI teammates not just for competence but for cognitive empathy: tailoring communication to user preferences and encouraging continuous user feedback to refine AI behavior (Binny et al., 2025). An AI that knows a user’s thinking style and current burden is more respectful of human limits. It avoids the pitfall of interrupting deep focus or insisting on unneeded detail. This human-centered stance may improve adoption: users are more likely to trust and accept an AI that understands their cognitive state and preferences. Additionally, the study foresees that teams could learn from the AI’s balancing: over time, members might become aware of different style strengths and coordinate better, potentially raising the team’s collective intelligence (Woolley et al., 2010).

6.3. Application domains

While the framework is general, several application contexts can be highlighted as examples: in virtual collaboration platforms (e.g. Slack with AI bots, or Microsoft Teams), an AI mediator could watch text chats and task boards, applying the load-balancing logic. In customer support centers with AI routing, the system might match tickets to agents not just by topic but by cognitive style (giving data-heavy issues to detail-oriented reps). In education and training, intelligent tutors could assign students projects aligned to their thinking modes and pace instruction to avoid cognitive overload. Similarly, in healthcare teams using AI assistants, the system might reroute test ordering tasks from overloaded doctors to available nurses, based on each person’s profile. Essentially, any domain where mixed human-AI teams coordinate complex work could benefit from a BDI-aware load manager.

7. Limitations and future work

The framework in this article is a proposal for collaboration in human-AI teams, and although the full code for a “Cognitive Load Balancing Simulation” is provided, empirical validation is still necessary to complement this work. Piloting the framework in a controlled environment, such as a software development team or a customer support center, is recommended. In such a setting, the alignment matrix (mappings from tasks to BDI styles), the predicted task load (easier or harder), and the AI allocation policies could be contrasted with feedback from real participants, and tests could compare conditions with and without AI allocation support to analyze whether the proposed framework reduces extraneous load and enhances germane load. Future work could involve user studies to refine the style-task matrix and load metrics and, eventually, to modify or add variables. Our load proxies are also heuristics; machine learning approaches might learn better models of cognitive state from collaboration logs.

The framework assumes stable style profiles and that moderate diversity is always best; however, team dynamics are fluid, and communication norms or emergent leadership can change how load is distributed. Integrating physiological or behavioral sensors (e.g., eye-tracking or stress indicators) could improve load estimation beyond the current signals.

Lastly, ethical and organizational factors are addressed in Annexes A and B. Taken together, this model lays a foundation for adaptive, neurocognitively informed teaming and opens avenues for interdisciplinary research among cognitive scientists, HCI designers, and AI planners.

7.1. Load estimation via predictive models

Machine learning offers a way to infer cognitive load from everyday digital traces and bio-signals. Transformer models trained on audit-log action sequences have been used to measure cognitive effort (Kim et al., 2024), while wearables (heart rate, electrodermal activity, etc.) provide complementary signals for stress and workload detection (Can et al., 2019; Kyriakou et al., 2019). Interpretable ML over keystroke and mouse patterns has also proven effective for stress detection in a simulated office environment (Naegelin et al., 2023). Together, these findings point to a multimodal predictive pipeline in which log features, communication pace and error rates are mapped to continuous load scores, enabling the AI system to anticipate overload and intervene proactively.
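A minimal sketch of the final stage of such a pipeline is shown below, assuming that behavioral features have already been aggregated per time window and that some windows carry an overload label (for example, from self-reports). Plain logistic regression stands in for the transformer and interpretable ML models cited above; the feature names, toy data and 0.7 intervention threshold are hypothetical. Each window is first standardized against the person’s own baseline, so that the score reflects deviation from that individual’s normal pace rather than absolute activity.

```python
import math

# Hypothetical per-window behavioral features.
FEATURES = ("actions_per_min", "msgs_per_min", "error_rate")


def zscore(window: dict, baseline: dict) -> list[float]:
    """Standardize a time window against the person's own (mean, std) baseline."""
    return [(window[f] - baseline[f][0]) / baseline[f][1] for f in FEATURES]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(X, y, lr=0.1, epochs=2000):
    """Batch gradient descent on the logistic (cross-entropy) loss."""
    w, b, n = [0.0] * len(X[0]), 0.0, len(X)
    for _ in range(epochs):
        gw, gb = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            gw = [g + err * xj for g, xj in zip(gw, xi)]
            gb += err
        w = [wj - lr * g / n for wj, g in zip(w, gw)]
        b -= lr * gb / n
    return w, b


def load_score(x, w, b) -> float:
    """Continuous load estimate in (0, 1)."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Toy, made-up training data: z-scored windows labeled 1 when overload was reported.
X = [[-1.0, -1.0, -1.0], [-0.5, 0.0, -0.5], [0.0, -0.5, 0.0],
     [1.0, 1.0, 1.5], [1.5, 0.5, 1.0], [2.0, 1.5, 2.0]]
y = [0, 0, 0, 1, 1, 1]
w, b = train_logistic(X, y)

baseline = {"actions_per_min": (20, 5), "msgs_per_min": (2, 1), "error_rate": (0.05, 0.05)}
now = zscore({"actions_per_min": 30, "msgs_per_min": 4, "error_rate": 0.15}, baseline)
score = load_score(now, w, b)
if score > 0.7:  # hypothetical intervention threshold
    print(f"Anticipated overload (score {score:.2f}): defer non-urgent tasks")
```

Per-person baselines may matter here because a fast typist and a deliberate one can produce very different raw counts at the same level of effort.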

7.2. Style-task fit optimization

Instead of a static style-task matrix, recommender-system methods can learn dynamic matches between cognitive styles and task types. Research in crowdsourcing shows that matrix factorization and deep recommenders predict worker-task affinities better than rules, while metadata-augmented and time-aware models adapt to context and user fatigue. By logging task attributes (type, difficulty, completion quality) and user profiles, such models can learn evolving “fit scores” that guide assignments (Dhinakaran & Nedunchelian, 2025). This approach allows the Coordinator/Manager agents to optimize allocations based on predicted load and diversity, transforming the heuristic mapping into a data-driven, adaptive system.
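The idea of learned fit scores can be illustrated with classic matrix factorization, which is simpler than the residual LSTM recommender of Dhinakaran & Nedunchelian (2025) and is swapped in here only for clarity. The sketch assumes a log of (member, task kind, completion quality) triples with quality scaled to [0, 1]; rows could equally be style groups instead of individual members. Stochastic gradient descent learns latent vectors whose dot product, added to the global mean, predicts quality for pairs that have never been observed. All values are invented for illustration.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def train_mf(ratings, n_members, n_kinds, k=2, lr=0.05, reg=0.01, epochs=2000, seed=0):
    """SGD matrix factorization: quality(u, i) ~ mu + P[u] . Q[i]."""
    rng = random.Random(seed)
    mu = sum(r for _, _, r in ratings) / len(ratings)  # global mean quality
    P = [[rng.gauss(0, 0.1) for _ in range(k)] for _ in range(n_members)]
    Q = [[rng.gauss(0, 0.1) for _ in range(k)] for _ in range(n_kinds)]
    data = list(ratings)
    for _ in range(epochs):
        rng.shuffle(data)
        for u, i, r in data:
            err = r - (mu + dot(P[u], Q[i]))
            for f in range(k):
                pu, qi = P[u][f], Q[i][f]
                # gradient step with L2 regularization on both factors
                P[u][f] += lr * (err * qi - reg * pu)
                Q[i][f] += lr * (err * pu - reg * qi)
    return mu, P, Q


def fit_score(model, u, i):
    mu, P, Q = model
    return mu + dot(P[u], Q[i])


# (member, task_kind, observed completion quality in 0..1); other pairs are unobserved.
ratings = [(0, 0, 0.9), (0, 1, 0.2), (1, 0, 0.8), (1, 2, 0.3), (2, 1, 0.9), (2, 2, 0.8)]
model = train_mf(ratings, n_members=3, n_kinds=3, k=3)
rmse = math.sqrt(sum((r - fit_score(model, u, i)) ** 2 for u, i, r in ratings) / len(ratings))
# Predicted fit for a pair never observed, e.g. member 0 on task kind 2:
print(round(fit_score(model, 0, 2), 2), round(rmse, 3))
```

The predicted scores for unobserved pairs are what a Coordinator agent could consult when deciding who should receive a task type that a member has not yet attempted; retraining as new completions are logged would let the scores evolve over time.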

8. Conclusion

A BDI-based framework has been introduced for managing cognitive load in mixed human-AI teams. By combining CLT with Thinking-Style profiles, and using AI to monitor simple behavior cues, work can be dynamically assigned so that each person operates near their optimal zone. The AI acts like a “cognitive load balancer”, shifting tasks from overloaded members and throttling interactions based on context. This approach aims to maximize germane load (productive effort) and minimize unnecessary extraneous load, regardless of domain. In doing so, it treats team cognition itself as a resource to steward, much like a factory manager ensures no machine is overtaxed.
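The load-balancing behavior described above can be sketched as a greedy rule: for every member whose estimated load exceeds an upper limit, shed the tasks that member fits worst, giving each one to the best-fitting colleague who can absorb it without crossing the same limit. This is a simplified illustration under assumed values (the 0.85 limit, task loads and fit scores are hypothetical), not the allocation policy of the published simulation, and it ignores the interaction-throttling side of the framework.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    kind: str
    load: float  # estimated share of a member's capacity (0..1)


@dataclass
class Member:
    name: str
    fit: dict[str, float]                 # style-task fit per task kind (0..1)
    tasks: list[Task] = field(default_factory=list)

    @property
    def load(self) -> float:
        return sum(t.load for t in self.tasks)


def rebalance(team: list[Member], upper: float = 0.85) -> list[tuple[str, str, str]]:
    """Greedily move tasks off members above `upper`, worst-fitting tasks first."""
    moves = []
    for donor in team:
        # sorted() returns a copy, so removing from donor.tasks below is safe
        for task in sorted(donor.tasks, key=lambda t: donor.fit.get(t.kind, 0.0)):
            if donor.load <= upper:
                break
            # only members who stay at or below the limit after receiving the task
            candidates = [m for m in team if m is not donor and m.load + task.load <= upper]
            if not candidates:
                continue  # nobody can absorb this task; try the next one
            best = max(candidates, key=lambda m: m.fit.get(task.kind, 0.0))
            donor.tasks.remove(task)
            best.tasks.append(task)
            moves.append((task.name, donor.name, best.name))
    return moves


ana = Member("Ana", {"analysis": 0.9, "planning": 0.6, "meeting": 0.2},
             [Task("report", "analysis", 0.4), Task("roadmap", "planning", 0.3),
              Task("client_call", "meeting", 0.3)])
bea = Member("Bea", {"analysis": 0.3, "meeting": 0.9}, [Task("docs", "writing", 0.3)])
carl = Member("Carl", {"planning": 0.8, "meeting": 0.5})
print(rebalance([ana, bea, carl]))  # [('client_call', 'Ana', 'Bea')]
```

Shedding the worst-fitting tasks first keeps each member on the work that best matches their style, in line with the goal of preserving germane load.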

The cognitive load balancing simulation for human-AI teams is publicly available on GitHub, and readers are encouraged to explore and test it: https://github.com/Evert75/Thinking_in_Balance

Future work should involve:

- Piloting the framework in real teams: through controlled experiments in collaborative environments. Measuring task performance, load indicators, and subjective experience would help refine the model’s predictive accuracy and practical applicability.

- Refining load indicators: to achieve more sensitive and context-aware estimations of cognitive effort. Integrating behavioral and physiological signals could yield a more dynamic representation of cognitive states. Such refinement would enable the AI manager to balance workload with higher precision and adaptability.

- Developing predictive models for dynamic style-task optimization: Another direction involves developing adaptive AI agents capable of learning individual cognitive patterns over time. By integrating reinforcement learning or predictive analytics, agents could anticipate overload and redistribute tasks proactively.
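As one possible starting point for the reinforcement-learning direction, an epsilon-greedy bandit can learn from experience which member should receive a given task kind. The sketch below is an assumption-laden illustration: the reward (quality minus a penalty for pushing load past 0.8) and the simulated members are hypothetical. In this toy setting, Ana produces higher-quality analysis but is close to capacity, so the allocator gradually learns to route analysis tasks to Bea, which is the kind of anticipatory redistribution described above.

```python
import random
from collections import defaultdict


def reward(quality: float, load_after: float, target: float = 0.8, penalty: float = 2.0) -> float:
    """Hypothetical reward: output quality minus a penalty for pushing load past target."""
    return quality - penalty * max(0.0, load_after - target)


class EpsilonGreedyAllocator:
    """Learns, per task kind, which member yields the highest average reward."""

    def __init__(self, members, epsilon=0.1, seed=0):
        self.members = list(members)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.q = defaultdict(float)  # (kind, member) -> running mean reward
        self.n = defaultdict(int)    # (kind, member) -> times tried

    def choose(self, kind):
        if self.rng.random() < self.epsilon:  # explore
            return self.rng.choice(self.members)
        return max(self.members, key=lambda m: self.q[(kind, m)])  # exploit

    def update(self, kind, member, r):
        key = (kind, member)
        self.n[key] += 1
        self.q[key] += (r - self.q[key]) / self.n[key]  # incremental mean


# Simulated environment with assumed numbers: member -> (mean quality, load after task).
env = random.Random(1)
profile = {"Ana": (0.9, 0.95), "Bea": (0.7, 0.6)}
alloc = EpsilonGreedyAllocator(profile)
for _ in range(500):
    m = alloc.choose("analysis")
    quality, load_after = profile[m]
    alloc.update("analysis", m, reward(quality + env.gauss(0, 0.05), load_after))
print({m: round(alloc.q[("analysis", m)], 2) for m in profile})
```

Because the load penalty is part of the reward, the allocator learns to avoid overloading a member before quality actually drops, rather than reacting afterwards.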

In the era of pervasive AI collaborators, such human-centered frameworks are crucial. This paper’s contribution is a cohesive theoretical architecture: a dual-module AI (Coordinator/Manager) that learns team members’ styles and loads and uses that knowledge to adapt planning and communication (Liu et al., 2024; Zhao et al., 2022). This work is expected to spark further research on cognitive load-aware AI design. Ultimately, the goal is to ensure that neither human nor artificial agents operate at full cognitive capacity; instead, AI augmentation should support seamless cognitive functioning within the team, maintaining optimal performance while preventing burnout.

Annex A – Ethical implications

Profiling team members’ cognitive styles and monitoring behavioral indicators can help balance cognitive load, yet it raises significant ethical concerns. The long‑term viability of such technology depends on addressing privacy, fairness and autonomy considerations.

Risks of profiling and surveillance

- Privacy intrusions

AI systems may collect detailed interaction data (e.g., response latency, errors, task switching) to infer cognitive load. The agentic AI literature warns that current consent procedures often fail; devices like smart speakers record continuous voice data and share it with third parties without explicit user approval, leaving people unaware of how their information is used (Chandra & Navneet, 2025). Similar surveillance in the workplace could erode trust and violate data‑protection laws.

- Loss of autonomy and critical thinking

Over‑reliance on AI recommendations can reduce workers’ critical‑thinking engagement. If profiling leads to prescriptive task assignments, individuals may lose opportunities to develop new skills or challenge themselves.

- Bias and discrimination

Profiling may reinforce stereotypes if certain thinking styles are favored over others. Algorithms trained on incomplete or biased data could misclassify workers and entrench inequities.

- Opaque decision‑making

Employees may not understand how AI systems assign tasks or measure performance. The EU’s AI Act (European Commission, 2024) and regulations such as the CCPA/CPRA and GDPR emphasize the need for transparency and human oversight in high‑risk AI applications (Bahr, 2024).

Principles for responsible use

- Transparent data practices

Organizations should clearly explain what data are collected, how cognitive profiles are inferred and why the information is needed. Transparency about data sources and processing fosters trust and accountability.

- Dynamic and granular consent

Instead of one‑time “click‑through” agreements, consent must be ongoing, contextual and specific to each use case. Interfaces should provide fine‑grained privacy controls (e.g., opt-in/opt-out controls, the ability to delete personal data).

- User agency and override mechanisms

Workers should retain the right to question or override AI decisions. Human‑in‑the‑loop designs insert checkpoints where the AI pauses and requests human validation, and approval pipelines route outputs for human review. Override controls must be discoverable and intuitive, allowing employees to terminate or revert AI actions.

- Explainability and feedback

Explainability features (e.g., citations, decision traces or data footprints) help users understand how task assignments are determined. Clear feedback supports situational awareness and empowers workers to make informed decisions.

- Fairness and bias mitigation

Regularly audit algorithms for disparate impacts across demographics or thinking styles. Use inclusive datasets and involve diverse stakeholders when designing the style-task matrix.

- Legal compliance

Align profiling practices with data‑protection laws (GDPR, CCPA/CPRA) and forthcoming AI‑specific regulations, which categorize high‑risk applications and mandate transparency and human supervision (Bahr, 2024).

- Ethical governance

Establish oversight committees that include workers, managers, ethicists and legal experts. These bodies can review profiling methods, approve use cases and handle grievances.
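The regular audits recommended under fairness and bias mitigation could start from a simple selection-rate comparison across thinking styles. In the sketch below, the favorable outcome (for instance, being offered a skill-building task) and the grouping by dominant BDI quadrant are assumptions; the 0.8 threshold mirrors the four-fifths rule used as a screening heuristic in U.S. employment-selection guidelines. A flag signals that a group deserves closer review; it does not by itself prove discrimination.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, favorable: bool) -> favorable rate per group."""
    total, fav = Counter(), Counter()
    for group, ok in records:
        total[group] += 1
        fav[group] += bool(ok)
    return {g: fav[g] / total[g] for g in total}


def disparate_impact(records, threshold=0.8):
    """Ratio of each group's rate to the best group's rate; flag ratios below threshold."""
    rates = selection_rates(records)
    best = max(rates.values())
    ratios = {g: (r / best if best else 1.0) for g, r in rates.items()}
    flagged = sorted(g for g, ratio in ratios.items() if ratio < threshold)
    return ratios, flagged


# Hypothetical audit log: dominant quadrant and whether a skill-building task was offered.
records = [("A", i < 6) for i in range(10)] + [("D", i < 3) for i in range(10)]
ratios, flagged = disparate_impact(records)
print(flagged)  # ['D']
```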

Designing consent mechanisms

Research on agentic AI emphasizes that consent should be a dynamic, ongoing conversation rather than a one‑off formality. In a workplace load‑balancing system, this could involve:

- Periodic reminders explaining current monitoring practices and offering the opportunity to adjust permissions.

- Contextual prompts before sensitive data are captured or analyzed, especially when AI seeks to infer intimate cognitive states.

- “Decide‑to‑delegate” options, allowing workers to choose whether the AI can autonomously reassign tasks or needs approval beforehand.

These measures respect workers’ autonomy and empower them to decide when and how their cognitive data are used.
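The consent mechanisms listed above can be represented as a per-worker settings object that the system checks before collecting any signal or reassigning any task. This is a minimal sketch with hypothetical names: signals are opt-in and default to off, unknown signals are denied, a periodic reminder becomes due after a configurable interval, and the `Delegation` modes are one possible encoding of the decide-to-delegate option.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timedelta
from enum import Enum


class Delegation(Enum):
    AUTONOMOUS = "autonomous"  # AI may reassign tasks without asking
    ASK_FIRST = "ask_first"    # AI must request approval for each reassignment
    MANUAL = "manual"          # AI may only suggest; the worker reassigns


def _default_signals() -> dict[str, bool]:
    # Opt-in by default: nothing is collected until the worker enables it.
    return {"response_latency": False, "error_rate": False, "task_switching": False}


@dataclass
class ConsentProfile:
    signals: dict[str, bool] = field(default_factory=_default_signals)
    delegation: Delegation = Delegation.ASK_FIRST
    last_reviewed: datetime = field(default_factory=datetime.now)
    review_every: timedelta = timedelta(days=30)

    def may_collect(self, signal: str) -> bool:
        """Contextual check run before any signal is captured or analyzed."""
        return self.signals.get(signal, False)  # unknown signals are denied

    def set_signal(self, signal: str, allowed: bool, when: datetime | None = None) -> None:
        self.signals[signal] = allowed
        self.last_reviewed = when or datetime.now()

    def needs_reminder(self, now: datetime | None = None) -> bool:
        """True when a periodic reminder about monitoring practices is due."""
        return (now or datetime.now()) - self.last_reviewed >= self.review_every

    def can_auto_reassign(self) -> bool:
        return self.delegation is Delegation.AUTONOMOUS


t0 = datetime(2025, 1, 1)
consent = ConsentProfile(last_reviewed=t0)
print(consent.may_collect("error_rate"))                    # False: not yet opted in
consent.set_signal("error_rate", True, when=t0)
print(consent.needs_reminder(now=t0 + timedelta(days=31)))  # True: monthly review due
```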

Continuous human supervision is essential for maintaining accountability. Human‑in‑the‑loop models provide points where managers can review task assignments, intervene if an algorithm’s decision seems unfair, or adjust the model based on qualitative feedback. Such oversight mitigates risks of over‑automation, ensures that workers are not treated solely as quantitative metrics, and aligns AI interventions with organizational values.
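A human-in-the-loop checkpoint of this kind, together with the override controls and decision traces recommended in the principles above, could be sketched as an approval queue. In this hypothetical design, AI proposals do not change any assignment until a person approves them, every proposal carries the evidence behind it and a plain-language explanation, and an approved change can later be reverted by a human. Class and field names are illustrative assumptions, not part of the published simulation.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PROPOSED = "proposed"
    APPROVED = "approved"
    REJECTED = "rejected"
    REVERTED = "reverted"


@dataclass
class Reassignment:
    task: str
    from_member: str
    to_member: str
    evidence: dict[str, float]  # decision trace: the inputs behind the proposal
    status: Status = Status.PROPOSED

    def explain(self) -> str:
        """Plain-language summary shown to the people affected."""
        details = ", ".join(f"{k}={v:.2f}" for k, v in self.evidence.items())
        return f"Move '{self.task}' from {self.from_member} to {self.to_member} ({details})"


class ApprovalQueue:
    """AI proposals take effect only after human approval; approvals can be reverted."""

    def __init__(self, assignments: dict[str, str]):
        self.assignments = dict(assignments)  # task -> member currently responsible
        self.log: list[Reassignment] = []     # full history, kept for auditing

    def propose(self, task: str, to_member: str, evidence: dict[str, float]) -> Reassignment:
        r = Reassignment(task, self.assignments[task], to_member, evidence)
        self.log.append(r)
        return r

    def pending(self) -> list[Reassignment]:
        return [r for r in self.log if r.status is Status.PROPOSED]

    def approve(self, r: Reassignment) -> None:
        self._require(r, Status.PROPOSED)
        self.assignments[r.task] = r.to_member
        r.status = Status.APPROVED

    def reject(self, r: Reassignment) -> None:
        self._require(r, Status.PROPOSED)
        r.status = Status.REJECTED

    def revert(self, r: Reassignment) -> None:
        """Human override: undo an approved reassignment."""
        self._require(r, Status.APPROVED)
        self.assignments[r.task] = r.from_member
        r.status = Status.REVERTED

    @staticmethod
    def _require(r: Reassignment, expected: Status) -> None:
        if r.status is not expected:
            raise ValueError(f"cannot change a {r.status.value} proposal")


queue = ApprovalQueue({"client_call": "Ana"})
proposal = queue.propose("client_call", "Bea",
                         {"donor_load": 1.0, "threshold": 0.85, "receiver_fit": 0.9})
print(proposal.explain())
print(queue.assignments)  # {'client_call': 'Ana'}: unchanged until approval
queue.approve(proposal)   # a manager or the worker accepts the proposal
queue.revert(proposal)    # later, the worker overrides the AI decision
print(queue.assignments)  # {'client_call': 'Ana'}
```

A production system would also need to check that the task has not been reassigned again before reverting; the log of proposals doubles as the audit trail that an oversight committee could review.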

Annex B – Organizational implications

Adopting AI to balance cognitive load and optimize collaborative work has profound organizational impacts. It alters structures, culture and work practices. The most recent literature highlights several key implications.

Work restructuring and emerging roles

- Task redesign and the disappearance of routine roles. Reviews of human‑AI teams note that AI integration reshapes jobs; some tasks vanish while new responsibilities emerge, requiring change‑management strategies to support the transition (Latto et al., 2025).

- Hybrid roles and “dual experts”. Implementing hybrid models demands workers who combine domain expertise with AI skills. For instance, General Electric created “dual experts” who mix machine‑learning skills with operational knowledge, which helped accelerate AI adoption and cut implementation timelines by 30% (Machucho & Ortiz, 2025).

Organizational culture and resistance to change

- Resistance, skill gaps and data silos. As Machucho & Ortiz (2025) mention, many AI projects fail due to resistance to change, lack of AI talent and difficulty scaling beyond pilot projects. Forty‑six percent of SMEs report data silos that hinder interoperability; adopting cloud‑first platforms and standardized APIs can alleviate this.

- Need for data‑driven and collaborative cultures. Successful AI adoption requires cultures that value data‑driven decisions and collaboration between AI systems and humans. AI literacy initiatives and hybrid roles can ease the cultural shift.

- Fostering creativity and empathy. Human‑centered management sources note that creativity and empathy must be nurtured, especially in remote or hybrid teams; they recommend inclusive mentoring and workshops to support the human connection (Steingold, 2025). Experimental evidence shows that collaborating with AI reduces peer interaction and increases loneliness and emotional fatigue, which can lead to counterproductive behavior (Meng et al., 2025).

- Sabotage and acceptance. A 2025 Workplace Intelligence survey found that 31% of employees (41% of Gen Z) sabotage AI initiatives due to fear or dissatisfaction with the tools. As Schawbel (2025) notes, strategies to improve adoption include a clear organization‑wide AI strategy, selecting suitable vendors and empowering “AI champions” among employees.

Skill development and organizational learning

- Upskilling and reskilling. AI adoption causes job insecurity and pressure to acquire new skills; continuous training and mental‑health programs are crucial (Soulami et al., 2024).

- Continuous organizational learning. Successful AI integration depends on capabilities in data pipeline management, algorithm development and AI democratization. Machucho & Ortiz (2025) affirm that cross‑functional collaboration among domain experts, business leaders, data scientists and frontline staff fosters rapid experimentation and feedback, leading to productivity gains of up to 40% while maintaining ethics.

- Strategic alignment and performance metrics. Active involvement of top management and the development of AI‑specific key performance indicators ensure alignment with business strategy and help manage expectations.

Leadership and ethical governance

- Empathetic leadership and emotional support. AI collaboration reduces interpersonal interaction, leading to loneliness; leaders’ emotional support mitigates this and prevents emotional fatigue. Managers should offer social spaces, team‑building and encouragement to meet employees’ emotional needs.

- Governance and cross‑functional committees. Meng et al. (2025) mention that ethical governance is essential. HR teams, ethicists and data scientists should form committees to develop fair AI policies. Techniques such as fairness‑aware machine learning and blockchain auditing have reduced discrimination by 11.3% in financial services (Steingold, 2025).

- Policies and ethical competencies. Machucho & Ortiz (2025) emphasize that a robust framework should include internal AI ethics policies, alignment with organizational values, development of technical and ethical competencies, risk assessments, interorganizational collaboration and performance metrics for ethical AI.

Well‑being, interpersonal relationships and mental health

- Loneliness and emotional fatigue. According to Meng et al. (2025), replacing collaborative tasks with AI reduces peer interaction; this isolation depletes emotional resources and can lead to fatigue and counterproductive behaviors. Organizations should provide social activities, spaces for interaction and psychological support.

- Depression and mental‑health risks. Systematic reviews report that AI‑induced job insecurity leads to stress and anxiety, underscoring the need for mental‑health support and work‑life balance policies (Soulami et al., 2024).

Organizations must embrace a human‑centered approach to AI integration, treating technology as a complement to human capabilities rather than a replacement. Transparent communication about how AI works and why decisions are made is essential for building trust and reducing resistance; early engagement and clarity about evolving roles help ease job insecurity. Finally, successful implementation demands strategic planning: clear goals, performance metrics, comprehensive training, robust ethical guidelines and support mechanisms to ensure that organizations harness AI’s benefits without compromising psychological health or social cohesion.

References

Aggarwal, I., Woolley, A. W., Chabris, C. F., & Malone, T. W. (2019). The impact of cognitive style diversity on implicit learning in teams. Frontiers in Psychology, 10, 112. https://doi.org/10.3389/fpsyg.2019.00112

Bahr, C. (2024). CCPA vs GDPR: key differences and similarities. https://usercentrics.com/knowledge-hub/ccpa-vs-gdpr

Binny, J., Cherian, J., Verghis, A., Varghise, S., Mumthas, S. & Sibichan, J. (2025). The cognitive paradox of AI in education: between enhancement and erosion. Frontiers in Psychology, 16, 1550621. https://doi.org/10.3389/fpsyg.2025.1550621

Brown, L. (2024). Team Cognitive Load: The Hidden Crisis in Modern Tech Organizations. IT Revolution. https://itrevolution.com/articles/team-cognitive-load-the-hidden-crisis-in-modern-tech-organizations

Can, Y., Arnrich, B., & Ersoy, C. (2019). Stress detection in daily life scenarios using smartphones and wearable sensors: A survey. Journal of Biomedical Informatics, 92, 103139. https://doi.org/10.1016/j.jbi.2019.103139

Carey, A. (1997). Cognitive styles of Forest Service scientists and managers in the Pacific Northwest. United States Department of Agriculture, General Technical Report PNW-GTR-414. https://www.fs.usda.gov/pnw/pubs/pnw_gtr414.pdf

Chandra, J., & Navneet, S. (2025). Advancing responsible innovation in agentic AI: A study of ethical frameworks for household automation. arXiv preprint. https://arxiv.org/pdf/2507.15901v1

Clark, A., & Chalmers, D. (1998). The Extended Mind. Analysis, 58(1), 7-19. http://www.jstor.org/stable/3328150

Clark, R., & Mayer, R. (2016). E‐Learning and the science of instruction: Proven guidelines for consumers and designers of multimedia learning (4th ed.). Wiley

Corral, E. (2020). Neurosciences applied to the integration of work teams: Software development projects. The Anáhuac Journal, 20(2), 38-79. https://doi.org/10.36105/theanahuacjour.2020v20n2.02

Daugherty, P., & James, H. (2019). Human + Machine: Reimagining work in the age of AI, (1st ed.). Harvard Business Review Press

Dhinakaran, K., & Nedunchelian, R. (2025). An automated recommendation system for crowdsourcing data using improved heuristic-aided residual long short-term memory. Computational Intelligence, 41(1), 824-835. https://doi.org/10.1111/coin.70017

European Commission. (2024). EU Artificial Intelligence Act. https://artificialintelligenceact.eu

Gkintoni, E., Antonopoulou, H., Sortwell, A., & Halkiopoulos, C. (2025). Challenging cognitive load theory: the role of educational neuroscience and artificial intelligence in redefining learning efficacy. Brain Sciences, 15(2), 203. https://doi.org/10.3390/brainsci15020203

Herrmann, N. (1989). The Creative Brain. Lake Lure: Brain Books

Herrmann, N., & Herrmann-Nehdi, A. (2015). The whole brain business book: unlocking the power of whole brain thinking in organizations, teams, and individuals (2nd ed.). McGraw-Hill

Hong, L., & Page, S. E. (2004). Groups of diverse problem solvers can outperform groups of high-ability problem solvers. Proceedings of the National Academy of Sciences of the United States of America, 101(46), 16385-16389. https://doi.org/10.1073/pnas.0403723101

IBM. (2024, April 9). Organizations work to build public trust. Bangkok Post. https://www.bangkokpost.com/business/general/2773239/ibm-insists-organisations-work-to-build-public-trust

Kim, S., Warner, B. C., Lew, D., Lou, S. S., & Kannampallil, T. (2024). Measuring cognitive effort using tabular transformer-based language models of electronic health record-based audit log action sequences. Journal of the American Medical Informatics Association, 31(10), 1527. https://doi.org/10.1093/jamia/ocae171

Kyriakou, K., Resch, B., Sagl, G., Petutschnig, A., Werner, C., Niederseer, D., Liedlgruber, M., Wilhelm, F. H., Osborne, T., & Pykett, J. (2019). Detecting moments of stress from measurements of wearable physiological sensors. Sensors, 19(17), 3805. https://doi.org/10.3390/s19173805

Latto, C., Richter, A., & Tate, M. (2025). Human-AI Teams’ Impact on Organizations – A Review. Proceedings of the 58th Hawaii International Conference on System Sciences. https://scholarspace.manoa.hawaii.edu/server/api/core/bitstreams/7f4d7d04-f097-472c-8fcb-65a36b925527/content

Liu, S., Shrutika, F., Zhang, B., Huang, Z., Sukhatme, G., & Qian, F. (2024). Effect of adaptive communication support on LLM-powered human-robot collaboration. arXiv. https://doi.org/10.48550/arXiv.2412.06808

Lui, H. (2024, May 26). Sheridan’s levels of autonomy. Blog on creativity, marketing, and the human condition. https://herbertlui.net/sheridans-levels-of-autonomy

Machucho, R., & Ortiz, D. (2025). The impacts of Artificial Intelligence on business innovation: A comprehensive review of applications, organizational challenges, and ethical considerations. Systems, 13(4), 264. https://doi.org/10.3390/systems13040264

Meng, Q., Wu, T. J., Duan, W., & Li, S. (2025). Effects of employee-Artificial Intelligence (AI) collaboration on Counterproductive Work Behaviors (CWBs): Leader emotional support as a moderator. Behavioral Sciences (Basel, Switzerland), 15(5), 696. https://doi.org/10.3390/bs15050696

Miao, J., Davis, J., Pritchard, J., & Zou, J. (2025). Paper2Agent: Reimagining research papers as interactive and reliable AI Agents. Department of Computer Science, Stanford University. arXiv preprint. https://arxiv.org/abs/2509.06917v1

Naegelin, M., Weibel, R., Kerr, J., Schinazi, R., La Marca, R., Von Wangenheim, F., Hoelscher, C., & Ferrario, A. (2023). An interpretable machine learning approach to multimodal stress detection in a simulated office environment. Journal of Biomedical Informatics, 139, 104299. https://doi.org/10.1016/j.jbi.2023.104299

NeuronTest. (2025). NeuronTest: Brain Dominance Test (Version 1.01) [Mobile app]. App Store. https://apps.apple.com/mx/app/neurontest/id6752271091

Pérez, M., Rodríguez, B., Delgado, G., Del Pilar, R., Granda, D., & Tamayo, V. (2023). Learning styles, study habits, project-based learning in research methodology. Referencia Pedagógica, 11(3), 120-135. http://scielo.sld.cu/scielo.php?script=sci_arttext&pid=S2308-30422023000300120&lng=es&tlng=en

Pryke, B. (2025). How to use Jupyter Notebook: A beginner’s tutorial. Dataquest, 2025. https://www.dataquest.io/blog/jupyter-notebook-tutorial

Quinn, J., & Moody, J. (2020). Computational modeling. In P. Atkinson, S. Delamont, A. Cernat, J. W. Sakshaug, & R. A. Williams (Eds.), SAGE Research Methods Foundations. https://doi.org/10.4135/9781526421036945708

Rao, H. M., Smalt, C. J., Rodriguez, A., Wright, H. M., Mehta, D. D., Brattain, L. J., Edwards, H. M., 3rd, Lammert, A., Heaton, K. J., & Quatieri, T. F. (2020). Predicting Cognitive Load and Operational Performance in a Simulated Marksmanship Task. Frontiers in Human Neuroscience, 14, 222. https://doi.org/10.3389/fnhum.2020.00222

Schawbel, D. (2025). AI Adoption Study. Workplace Intelligence. https://workplaceintelligence.com/ai-adoption-study

Shneiderman, B. (2022). Human-Centered AI, (1st ed.). Oxford University Press

Soulami, M., Benchekroun, S., & Galiulina, A. (2024). Exploring how AI adoption in the workplace affects employees: A bibliometric and systematic review. Frontiers in Artificial Intelligence, 7, 1473872. https://doi.org/10.3389/frai.2024.1473872

Steingold, L. (2025). The state of work 2025: AI + HI And the trends. People Managing People – Workforce Management. https://peoplemanagingpeople.com/workforce-management/ai-and-human-intelligence

Sternberg, R. J. (1999). Estilos de pensamiento: Claves para identificar nuestro modo de pensar y enriquecer nuestra capacidad de reflexión. Paidós.

Sweller, J. (1988). Cognitive load during problem solving: Effects on learning. Cognitive Science, 12(2), 257-285. https://doi.org/10.1207/s15516709cog1202_4

Wang, D., Churchill, E., Maes, P., Fan, X., Shneiderman, B., Shi, Y., & Wang, Q. (2020). From Human-Human Collaboration to Human-AI Collaboration: Designing AI Systems That Can Work Together with People. CHI Conference on Human Factors in Computing Systems, 2020, 1-6. https://dl.acm.org/doi/abs/10.1145/3334480.3381069

Wilson, G. (2002). An analysis of mental workload in pilots during flight using multiple psychophysiological measures. The International Journal of Aviation Psychology, 12(1), 3-18. https://doi.org/10.1207/S15327108IJAP1201_2

Woolley, A., Chabris, C., Pentland, A., Hashmi, N., & Malone, T. (2010). Evidence of a collective intelligence factor in the performance of human groups. Science, 330, 686-688. https://www.science.org/doi/10.1126/science.1193147

Zhang, Y., Robertson, P., Shu, T., Hong, S., & Williams, B. (2024). Risk-bounded online team interventions via theory of mind. 2024 IEEE-ICRA, 964-970, https://ieeexplore.ieee.org/document/10609865

Zhao, M., Simmons, R., & Admoni, H. (2022). The role of adaptation in collective human-AI teaming. Topics in Cognitive Science, 17(2), 291-323. https://doi.org/10.1111/tops.12633

[1] In this paper, the concepts of thinking style and cognitive style are used as synonyms.

[2] The Brain Dominance Instrument (BDI), developed by Herrmann (2015), is a test to measure and describe people’s thinking preferences.

[1] evert.cp@gmail.com